[CS231n] Assignment 1 - Q2. Implement a Softmax Classifier

1. Preprocessing Datasets

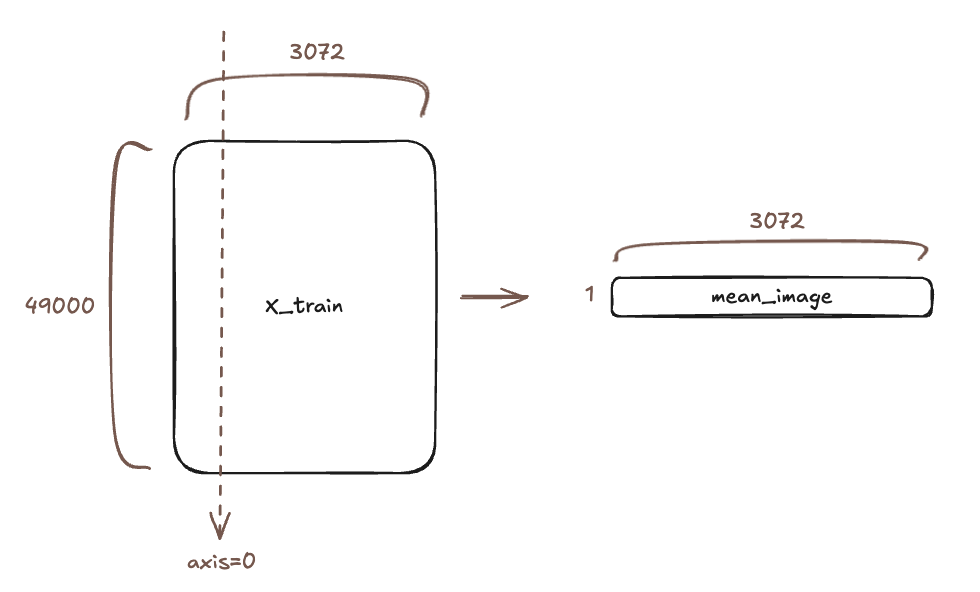

# preprocessing: subtract the mean image

# first: compute the image mean based on the training data

mean_image = np.mean(X_train, axis=0)

print(mean_image[:10]) # print a few of the elements

plt.figure(figsize=(4,4))

plt.imshow(mean_image.reshape((32,32,3)).astype('uint8')) # visualize the mean image

plt.show()

# second: subtract the mean image from train and test data

X_train -= mean_image

X_val -= mean_image

X_test -= mean_image

X_dev -= mean_image

We have already flattened the X_train dataset in the previous code, so X_train $\in \mathbb{R}^{49000 \times 3072}$.

Why subtract the mean image from the dataset?

- Center the data: Makes the average of each feature zero.

- Stabilizes training and helps gradient descent converge faster.

- Normalize brightness: Eliminates the common bias of “brightness” shared across images, allowing the model to focus on structural differences.

# third: append the bias dimension of ones (i.e. bias trick) so that our classifier

# only has to worry about optimizing a single weight matrix W.

X_train = np.hstack([X_train, np.ones((X_train.shape[0], 1))])

X_val = np.hstack([X_val, np.ones((X_val.shape[0], 1))])

X_test = np.hstack([X_test, np.ones((X_test.shape[0], 1))])

X_dev = np.hstack([X_dev, np.ones((X_dev.shape[0], 1))])

Here is an important trick for handling the bias term $b$. Recall the linear score function: \(f(x_i, W, b) = W x_i + b\) Computating $W$ and $b$ separately requires maintaining two distinct parameters (matrices and vectors), which complicates the implementation of gradients and updates.

We can use the Bias Trick to simplify this:

\[\mathbf{x}' = \begin{bmatrix} \mathbf{x} \\ 1 \end{bmatrix} \in \mathbb{R}^{D+1}, \qquad \mathbf{W}' = \begin{bmatrix} \mathbf{W} & \mathbf{b} \end{bmatrix} \in \mathbb{R}^{C \times (D+1)}\](Note: Dimensions may transpose depending on implementation. In our code, $X$ is $N \times D$, so we append 1 horizontally)

\[X' = \begin{bmatrix} X & \mathbf{1} \end{bmatrix} \in \mathbb{R}^{N \times (D+1)}, \quad W' = \begin{bmatrix} W \\ b^\top \end{bmatrix} \in \mathbb{R}^{(D+1) \times C}\] \[f(X') = X'W' = XW + \mathbf{1}b^\top = XW + b \in \mathbb{R}^{N \times C}\]By appending a column of ones to $X$, we absorb the bias $b$ into the weight matrix $W$. Now we only need to maintain a single matrix $W$.

2. Implementing softmax_loss_naive

#####################################################################

# TODO:

# Compute the gradient of the loss function and store it dW.

# Rather that first computing the loss and then computing the

# derivative,

# it may be simpler to compute the derivative at the same time that

# the loss is being computed. As a result you may need to modify some

# of the code above to compute the gradient.

#####################################################################

def softmax_loss_naive(W, X, y, reg):

"""

Softmax loss function, naive implementation (with loops)

Inputs have dimension D, there are C classes, and we operate on minibatches

of N examples.

Inputs:

- W: A numpy array of shape (D, C) containing weights.

- X: A numpy array of shape (N, D) containing a minibatch of data.

- y: A numpy array of shape (N,) containing training labels; y[i] = c means

that X[i] has label c, where 0 <= c < C.

- reg: (float) regularization strength

Returns a tuple of:

- loss as single float

- gradient with respect to weights W; an array of same shape as W

"""

# Initialize the loss and gradient to zero.

loss = 0.0

dW = np.zeros_like(W)

# compute the loss and the gradient

num_classes = W.shape[1]

num_train = X.shape[0]

for i in range(num_train):

scores = X[i].dot(W) # shape: (C,)

# compute the probabilities in a numerically stable way

# shift values for 'exp' to avoid numeric overflow

scores -= np.max(scores)

# Softmax Function

p = np.exp(scores)

p /= p.sum() # normalize

logp = np.log(p)

loss -= logp[y[i]] # negative log probability is the loss

# My Code

p[y[i]] -= 1

dW += np.outer(X[i], p)

# normalized hinge loss plus regularization

loss = loss / num_train + reg * np.sum(W * W)

# My Code

dW = dW / num_train + 2 * reg * W

return loss, dW

Before implementing the gradient code, let’s derive the gradient of the Softmax Loss.

The full loss function including regularization is:

\[L(W) = \frac{1}{N}\sum_{i=1}^{N} L_i(x_i, y_i, W) + \lambda R(W)\]Where for a single example $x_i$:

- Scores: $f = X_i W$ (where $f_k = X_i W_k$)

- Softmax Probability: $p_k = \frac{e^{f_k}}{\sum_j e^{f_j}}$

- Loss: $L_i = -\log(p_{y_i})$

2.1 Analytical Gradient Derivation

Our goal is to find $\frac{\partial L_i}{\partial W}$. We use the chain rule: \(\frac{\partial L_i}{\partial W} = \frac{\partial L_i}{\partial f} \frac{\partial f}{\partial W}\)

Step 1: Derivative w.r.t Scores ($f_k$)

Let $k$ be any class index. We want $\frac{\partial L_i}{\partial f_k}$.

Since $L_i = -\log(p_{y_i})$, we differentiating $-\log(\frac{e^{f_{y_i}}}{\sum e^{f_j}})$.

Case 1 : $k = y_i$ (Correct Class)

\[\begin{aligned} \frac{\partial L_i}{\partial f_{y_i}} &= \frac{\partial}{\partial f_{y_i}} (-\log p_{y_i}) = -\frac{1}{p_{y_i}} \frac{\partial p_{y_i}}{\partial f_{y_i}} \\ &= -\frac{1}{p_{y_i}} [p_{y_i}(1-p_{y_i})] = -(1-p_{y_i}) = p_{y_i} - 1 \end{aligned}\]Case 2 : $k \neq y_i$ (Incorrect Class)

\[\begin{aligned} \frac{\partial L_i}{\partial f_k} &= \frac{\partial}{\partial f_k} (-\log p_{y_i}) = -\frac{1}{p_{y_i}} \frac{\partial p_{y_i}}{\partial f_k} \\ &= -\frac{1}{p_{y_i}} [-p_{y_i}p_k] = p_k \end{aligned}\]Combine Cases : We can combine these into a single expression using the indicator function $\mathbb{1}(k=y_i)$:

\[\frac{\partial L_i}{\partial f_k} = p_k - \mathbb{1}(k=y_i)\]This is simply the probability vector minus the one-hot label vector. Let $\delta = p - y_{\text{onehot}}$. Then $\frac{\partial L_i}{\partial f} = \delta$.

Step 2: Derivative w.r.t Weights ($W$)

The score for class $k$ is $f_k = x_i \cdot W_k$ (dot product).

So, $\frac{\partial f_k}{\partial W_{d,k}} = x_{i,d}$. (Note: $f_k$ only depends on the $k$-th column of $W$).

Thus, for a specific weight element $W_{d,k}$:

\[\frac{\partial L_i}{\partial W_{d,k}} = \frac{\partial L_i}{\partial f_k} \frac{\partial f_k}{\partial W_{d,k}} = (p_k - \mathbb{1}(k=y_i)) x_{i,d}\]Step 3: Vectorized Gradient

Collecting this for all $d, k$, we get the outer product: \(\nabla_W L_i = x_i^T (p - y_{\text{onehot}})\)

(Dimension check: $x_i^T$ is $(D,1)$, $(p-y)$ is $(1,C)$ $\rightarrow$ $(D,C)$ matrix).

2.2 Final Gradient Formula

Averaging over $N$ examples and adding regularization:

\[\nabla_W L(W) = \frac{1}{N} \sum_{i=1}^{N} x_i^T (p_i - y_i^{\text{onehot}}) + 2\lambda W\]2.3 Evaluation

# Evaluate the naive implementation of the loss we provided for you:

from cs231n.classifiers.softmax import softmax_loss_naive

import time

# generate a random Softmax classifier weight matrix of small numbers

W = np.random.randn(3073, 10) * 0.0001

loss, grad = softmax_loss_naive(W, X_dev, y_dev, 0.000005)

print('loss: %f' % (loss, ))

# As a rough sanity check, our loss should be something close to -log(0.1).

print('loss: %f' % loss)

print('sanity check: %f' % (-np.log(0.1)))

2.4 Inline Question 1

Why do we expect our loss to be close to -log(0.1)?

Answer

Since $W$ is initialized with very small random numbers ($\approx 0$), the scores $f = XW$ will be approximately $0$. Consequently, the Softmax probabilities will be uniform across all classes: $$ p_k = \frac{e^{f_k}}{\sum_j e^{f_j}} \approx \frac{e^0}{\sum_{j=1}^{10} e^0} = \frac{1}{10} = 0.1 $$ The loss for each example is $Li = -\log(p_{y_i}) \approx -\log(0.1)$.

Enjoy Reading This Article?

Here are some more articles you might like to read next: